Introduction Appendix Cooperative Groups describes synchronization primitives for various groups of CUDA threads. Appendix CUDA Dynamic Parallelism describes how to launch and synchronize one kernel from another. Appendix Mathematical Functions lists the mathematical functions supported in CUDA. Appendix C/C++Language Support lists the C++features supported in device code. Appendix Texture Fetching gives more details on texture fetching Appendix Compute Capabilities gives the technical specifications of various devices, as well as more architectural details. Appendix Driver API introduces the low-level driver API. Appendix CUDA Environment Variables lists all the CUDA environment variables. Appendix Unified Memory Programming introduces the Unified Memory programming model. www.nvidia.com CUDA C Programming Guide PG-02829-001v9.2|7

Introduction www.nvidia.com CUDA C Programming Guide PG-02829-001_v9.2 | 7 ‣ Appendix Cooperative Groups describes synchronization primitives for various groups of CUDA threads. ‣ Appendix CUDA Dynamic Parallelism describes how to launch and synchronize one kernel from another. ‣ Appendix Mathematical Functions lists the mathematical functions supported in CUDA. ‣ Appendix C/C++ Language Support lists the C++ features supported in device code. ‣ Appendix Texture Fetching gives more details on texture fetching ‣ Appendix Compute Capabilities gives the technical specifications of various devices, as well as more architectural details. ‣ Appendix Driver API introduces the low-level driver API. ‣ Appendix CUDA Environment Variables lists all the CUDA environment variables. ‣ Appendix Unified Memory Programming introduces the Unified Memory programming model

Chapter 2. PROGRAMMING MODEL This chapter introduces the main concepts behind the CUDA programming model by outlining how they are exposed in C.An extensive description of CUDA C is given in Programming Interface. Full code for the vector addition example used in this chapter and the next can be found in the vectorAdd CUDA sample. 2.1.Kernels CUDA C extends C by allowing the programmer to define C functions,called kernels, that,when called,are executed N times in parallel by N different CUDA threads,as opposed to only once like regular C functions. A kernel is defined using the global declaration specifier and the number of CUDA threads that execute that kernel for a given kernel call is specified using a new <<<...>>execution configuration syntax(see C Language Extensions).Each thread that executes the kernel is given a unique thread ID that is accessible within the kernel through the built-in threadIdx variable. As an illustration,the following sample code adds two vectors A and B of size N and stores the result into vector C: /Kernel definition _global_void VecAdd(float*A,float+B,float+C) int i threadIdx.x; C[i]=A[i]+B[i]; int main() /Kernel invocation with N threads VecAdd<<<1,N>>>(A,B,C); Here,each of the N threads that execute vecAdd()performs one pair-wise addition. www.nvidia.com CUDA C Programming Guide PG-02829-001v9.2|8

www.nvidia.com CUDA C Programming Guide PG-02829-001_v9.2 | 8 Chapter 2. PROGRAMMING MODEL This chapter introduces the main concepts behind the CUDA programming model by outlining how they are exposed in C. An extensive description of CUDA C is given in Programming Interface. Full code for the vector addition example used in this chapter and the next can be found in the vectorAdd CUDA sample. 2.1. Kernels CUDA C extends C by allowing the programmer to define C functions, called kernels, that, when called, are executed N times in parallel by N different CUDA threads, as opposed to only once like regular C functions. A kernel is defined using the __global__ declaration specifier and the number of CUDA threads that execute that kernel for a given kernel call is specified using a new <<<...>>> execution configuration syntax (see C Language Extensions). Each thread that executes the kernel is given a unique thread ID that is accessible within the kernel through the built-in threadIdx variable. As an illustration, the following sample code adds two vectors A and B of size N and stores the result into vector C: // Kernel definition __global__ void VecAdd(float* A, float* B, float* C) { int i = threadIdx.x; C[i] = A[i] + B[i]; } int main() { ... // Kernel invocation with N threads VecAdd<<<1, N>>>(A, B, C); ... } Here, each of the N threads that execute VecAdd() performs one pair-wise addition

Programming Model 2.2.Thread Hierarchy For convenience,threadIdx is a 3-component vector,so that threads can be identified using a one-dimensional,two-dimensional,or three-dimensional thread index,forming a one-dimensional,two-dimensional,or three-dimensional block of threads,called a thread block.This provides a natural way to invoke computation across the elements in a domain such as a vector,matrix,or volume. The index of a thread and its thread ID relate to each other in a straightforward way: For a one-dimensional block,they are the same;for a two-dimensional block of size(D D),the thread ID of a thread of index(x,y)is(x+yD);for a three-dimensional block of size (Dx,Du D),the thread ID of a thread of index(x,y,z)is(x+y Dx+z Dx Du). As an example,the following code adds two matrices A and B of size NxN and stores the result into matrix C: /Kernel definition -global_void MatAdd(float A[N][N],float B[N][N], float C[N][N]) int i threadIdx.x; int threadIdx.y; c[i][j]=A[i][j]+B[i][j]: int main() Kernel invocation with one block of N+N+1 threads int numBlocks 1; dim3 threadsPerBlock(N,N); MatAdd<<<numBlocks,threadsPerBlock>>>(A,B,C); ””” There is a limit to the number of threads per block,since all threads of a block are expected to reside on the same processor core and must share the limited memory resources of that core.On current GPUs,a thread block may contain up to 1024 threads. However,a kernel can be executed by multiple equally-shaped thread blocks,so that the total number of threads is equal to the number of threads per block times the number of blocks. Blocks are organized into a one-dimensional,two-dimensional,or three-dimensional grid of thread blocks as illustrated by Figure 6.The number of thread blocks in a grid is usually dictated by the size of the data being processed or the number of processors in the system,which it can greatly exceed. www.nvidia.com CUDA C Programming Guide PG-02829-001v9.2|9

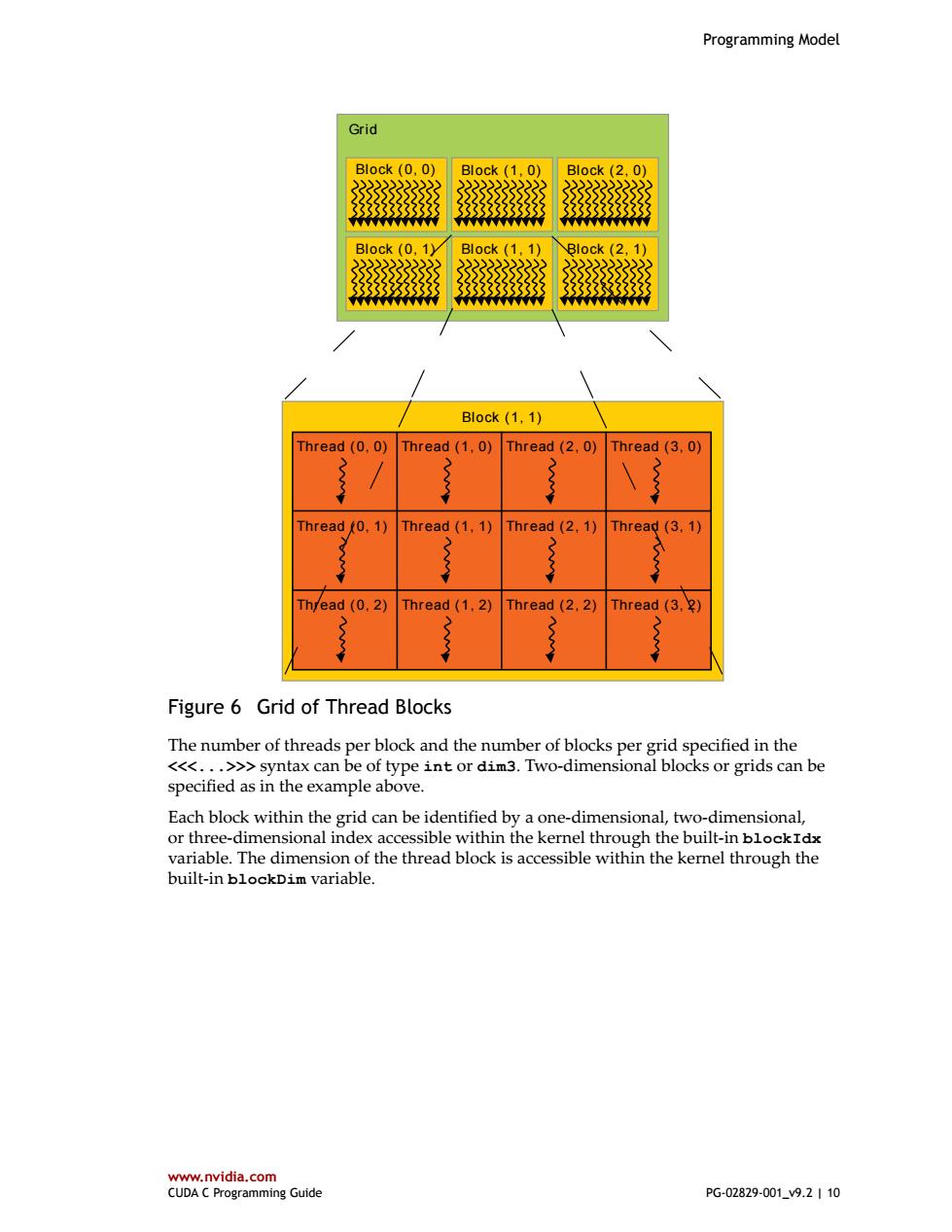

Programming Model www.nvidia.com CUDA C Programming Guide PG-02829-001_v9.2 | 9 2.2. Thread Hierarchy For convenience, threadIdx is a 3-component vector, so that threads can be identified using a one-dimensional, two-dimensional, or three-dimensional thread index, forming a one-dimensional, two-dimensional, or three-dimensional block of threads, called a thread block. This provides a natural way to invoke computation across the elements in a domain such as a vector, matrix, or volume. The index of a thread and its thread ID relate to each other in a straightforward way: For a one-dimensional block, they are the same; for a two-dimensional block of size (Dx, Dy),the thread ID of a thread of index (x, y) is (x + y Dx); for a three-dimensional block of size (Dx, Dy, Dz ), the thread ID of a thread of index (x, y, z) is (x + y Dx + z Dx Dy). As an example, the following code adds two matrices A and B of size NxN and stores the result into matrix C: // Kernel definition __global__ void MatAdd(float A[N][N], float B[N][N], float C[N][N]) { int i = threadIdx.x; int j = threadIdx.y; C[i][j] = A[i][j] + B[i][j]; } int main() { ... // Kernel invocation with one block of N * N * 1 threads int numBlocks = 1; dim3 threadsPerBlock(N, N); MatAdd<<<numBlocks, threadsPerBlock>>>(A, B, C); ... } There is a limit to the number of threads per block, since all threads of a block are expected to reside on the same processor core and must share the limited memory resources of that core. On current GPUs, a thread block may contain up to 1024 threads. However, a kernel can be executed by multiple equally-shaped thread blocks, so that the total number of threads is equal to the number of threads per block times the number of blocks. Blocks are organized into a one-dimensional, two-dimensional, or three-dimensional grid of thread blocks as illustrated by Figure 6. The number of thread blocks in a grid is usually dictated by the size of the data being processed or the number of processors in the system, which it can greatly exceed

Programming Model Grid B1ock(0,0) Block (1.0) Block(2,0) 3333333333 333333333 Block (0,1) Block (1,1) Block (2,1) 2222222 3333333333 Block(1,1) Thread (0.0)Thread (1.0) Thread(2,0)Thread(3.0) Thread (0,1)Thread(1.1) Thread(2.1) Thread(3,1) Th/ead (0.2) Thread (1,2) Thread(2,2) Thread(3,2) Figure 6 Grid of Thread Blocks The number of threads per block and the number of blocks per grid specified in the <<<...>>syntax can be of type int or dim3.Two-dimensional blocks or grids can be specified as in the example above. Each block within the grid can be identified by a one-dimensional,two-dimensional, or three-dimensional index accessible within the kernel through the built-in blockIdx variable.The dimension of the thread block is accessible within the kernel through the built-in blockDim variable. www.nvidia.com CUDA C Programming Guide PG-02829-001_v9.2|10

Programming Model www.nvidia.com CUDA C Programming Guide PG-02829-001_v9.2 | 10 Grid Block (1, 1) Thread (0, 0) Thread (1, 0) Thread (2, 0) Thread (3, 0) Thread (0, 1) Thread (1, 1) Thread (2, 1) Thread (3, 1) Thread (0, 2) Thread (1, 2) Thread (2, 2) Thread (3, 2) Block (0, 1) Block (1, 1) Block (2, 1) Block (0, 0) Block (1, 0) Block (2, 0) Figure 6 Grid of Thread Blocks The number of threads per block and the number of blocks per grid specified in the <<<...>>> syntax can be of type int or dim3. Two-dimensional blocks or grids can be specified as in the example above. Each block within the grid can be identified by a one-dimensional, two-dimensional, or three-dimensional index accessible within the kernel through the built-in blockIdx variable. The dimension of the thread block is accessible within the kernel through the built-in blockDim variable

Programming Model Extending the previous MatAdd()example to handle multiple blocks,the code becomes as follows. /Kernel definition global void MatAdd(float A[N][N],float B[N][N], Float c[N叮[N]) int i blockIdx.x blockDim.x threadIdx.x; int j=blockIdx.y blockDim.y threadIdx.y; if (i N &8 j N) C[i][j]=A[i][j]+B[i][j]: int main() /Kernel invocation dim3 threadsPerBlock(16,16); dim3 numBlocks(N threadsPerBlock.x,N threadsPerBlock.y); MatAdd<<<numBlocks,threadsPerBlock>>>(A,B,C); A thread block size of 16x16(256 threads),although arbitrary in this case,is a common choice.The grid is created with enough blocks to have one thread per matrix element as before.For simplicity,this example assumes that the number of threads per grid in each dimension is evenly divisible by the number of threads per block in that dimension, although that need not be the case. Thread blocks are required to execute independently:It must be possible to execute them in any order,in parallel or in series.This independence requirement allows thread blocks to be scheduled in any order across any number of cores as illustrated by Figure 5,enabling programmers to write code that scales with the number of cores. Threads within a block can cooperate by sharing data through some shared memory and by synchronizing their execution to coordinate memory accesses.More precisely,one can specify synchronization points in the kernel by calling the syncthreads() intrinsic function;syncthreads()acts as a barrier at which all threads in the block must wait before any is allowed to proceed.Shared Memory gives an example of using shared memory.In addition to synethreads(),the Cooperative Groups API provides a rich set of thread-synchronization primitives. For efficient cooperation,the shared memory is expected to be a low-latency memory near each processor core(much like an L1 cache)and synethreads()is expected to be lightweight. 2.3.Memory Hierarchy CUDA threads may access data from multiple memory spaces during their execution as illustrated by Figure 7.Each thread has private local memory.Each thread block has shared memory visible to all threads of the block and with the same lifetime as the block. All threads have access to the same global memory. There are also two additional read-only memory spaces accessible by all threads:the constant and texture memory spaces.The global,constant,and texture memory spaces are optimized for different memory usages(see Device Memory Accesses).Texture www.nvidia.com CUDA C Programming Guide PG-02829-001v9.2|11

Programming Model www.nvidia.com CUDA C Programming Guide PG-02829-001_v9.2 | 11 Extending the previous MatAdd() example to handle multiple blocks, the code becomes as follows. // Kernel definition __global__ void MatAdd(float A[N][N], float B[N][N], float C[N][N]) { int i = blockIdx.x * blockDim.x + threadIdx.x; int j = blockIdx.y * blockDim.y + threadIdx.y; if (i < N && j < N) C[i][j] = A[i][j] + B[i][j]; } int main() { ... // Kernel invocation dim3 threadsPerBlock(16, 16); dim3 numBlocks(N / threadsPerBlock.x, N / threadsPerBlock.y); MatAdd<<<numBlocks, threadsPerBlock>>>(A, B, C); ... } A thread block size of 16x16 (256 threads), although arbitrary in this case, is a common choice. The grid is created with enough blocks to have one thread per matrix element as before. For simplicity, this example assumes that the number of threads per grid in each dimension is evenly divisible by the number of threads per block in that dimension, although that need not be the case. Thread blocks are required to execute independently: It must be possible to execute them in any order, in parallel or in series. This independence requirement allows thread blocks to be scheduled in any order across any number of cores as illustrated by Figure 5, enabling programmers to write code that scales with the number of cores. Threads within a block can cooperate by sharing data through some shared memory and by synchronizing their execution to coordinate memory accesses. More precisely, one can specify synchronization points in the kernel by calling the __syncthreads() intrinsic function; __syncthreads() acts as a barrier at which all threads in the block must wait before any is allowed to proceed. Shared Memory gives an example of using shared memory. In addition to __syncthreads(), the Cooperative Groups API provides a rich set of thread-synchronization primitives. For efficient cooperation, the shared memory is expected to be a low-latency memory near each processor core (much like an L1 cache) and __syncthreads() is expected to be lightweight. 2.3. Memory Hierarchy CUDA threads may access data from multiple memory spaces during their execution as illustrated by Figure 7. Each thread has private local memory. Each thread block has shared memory visible to all threads of the block and with the same lifetime as the block. All threads have access to the same global memory. There are also two additional read-only memory spaces accessible by all threads: the constant and texture memory spaces. The global, constant, and texture memory spaces are optimized for different memory usages (see Device Memory Accesses). Texture